Civo - Kube three netes?

K3S, yeah… it’s even harder to pronounce than k8s isn’t it? Well, I’m not going to use this post to talk about the name, but rather the actual thing.

What is k3s?

K3s (created by Rancher) is basically kubernetes, but stripped down to a tiny little binary. It doesn’t require etcd to run, as full-fledged kubernetes does, but can use one of many databases as the data store (the default is sqlite, which works well for smaller clusters).

It requires very few resources to run the server, which makes it perfect for smaller machines, such as a Raspberry Pi, other microcomputers or a small VPS.

Setting up kubernetes can be a pain, not k3s though, it’s actually quite easy!

So for running it as a development environment, for stage or even as a smaller production cluster, k3s is actually a perfect fit.

Now, you might think “but… there are managed kubernetes clusters now, where I don’t have to mess with a master server myself! Why would I care about k3s?!”.

Well, I got news for you!

Civo have recently started a beta with their new #kube100 managed k3s service, and it works great!

Setting up a new cluster takes about 2-3 minutes and from my testing, it runs just as well as a managed k8s cluster at DigitalOcean or most other managed k8s services.

The tiny footprint of the k3s agents allows your nodes to use a lot more of their resources on the important stuff: your containers.

If you wish to read a more in-depth comparison between k8s and k3s, check out the blog post about it by Andy Jeffries (CTO at Civo)!

It might be worth noting that I have not tested it under very high loads, only in dev/stage environments, but there I have had no issues at all.

Other than the kube100 beta, Civo provides virtual servers for a decent price, their own CLI (available for most platforms) which allows you to provision servers with a few simple commands, and most other things you might need while working with a cloud.

Now, I will not make this post only about Civo, but I really enjoy their services and their community!

Civo is based in the UK, has an active slack community with high involvement from the company and is super fast with support if any issues arise.

K3S by yourself

I thought that I would first write about how to set up your own k3s cluster, then give you a short rundown of Civo’s implementation.

So we start from the base, with a manual setup!

So… K3S advertises itself as a “lightweight kubernetes”, which is quite true: the binary is about 40 MB and runs on most standard architectures (amd64, armv7, aarch64).

They say that it is perfect for IoT and Edge, while I would dare to say that it works just fine for smaller production clusters too (if you have thousands of machines the power of k8s might have an advantage though).

As I said earlier, it uses sqlite by default as data store instead of etcd, but you can actually (with the --datastore-* arguments on setup, such as --datastore-endpoint) use PostgreSQL, MySQL, MariaDB, dqlite or even go with etcd as the datastore if you really want.

If no data store is defined, it will use an embedded sqlite database, which works fine for smaller clusters.
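As a rough sketch of what pointing k3s at an external database could look like, the snippet below builds a PostgreSQL connection string and prints the resulting server command instead of running it. The database user, password, host and name are made-up placeholders, and I am assuming the --datastore-endpoint flag as described in the k3s docs, so double-check it against the version you install.

```shell
#!/bin/sh
# Hypothetical database details; replace with your own.
DB_USER="k3s"
DB_PASS="changeme"
DB_HOST="10.0.0.10"
DB_NAME="kubernetes"

# PostgreSQL endpoint in the form postgres://user:password@host:port/database
DATASTORE_ENDPOINT="postgres://${DB_USER}:${DB_PASS}@${DB_HOST}:5432/${DB_NAME}"

# Print the command for review instead of starting a server right away.
SERVER_CMD="k3s server --datastore-endpoint=${DATASTORE_ENDPOINT}"
echo "$SERVER_CMD"
```

Leaving the flag out entirely gives you the embedded sqlite database, which is what I would stick to for a small test cluster.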

While setting up k8s with a CNI and everything else is quite painful, k3s actually installs flannel by default. Not only that, it actually installs a few extra things too. This is less to my liking, but if you are only trying to set up a quick k3s cluster to play with, just go with the standard installation.

Any extra components you don’t want running can be disabled with the --disable flag (--no-deploy in older releases), but I would recommend that you check out the documentation if you really want to do this.

Flannel can be turned off with --flannel-backend=none, but then you will have to configure your own CNI and mod it slightly for k3s.
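To make that a bit more concrete, here is a small sketch that assembles the server arguments and prints the install command without running it. It assumes a k3s release where bundled components are switched off with --disable, and it uses traefik purely as an example of something to leave out. INSTALL_K3S_EXEC is the variable the install script reads extra arguments from.

```shell
#!/bin/sh
# Components to leave out; "traefik" is only an example choice.
DISABLE_COMPONENTS="traefik"

# Start with flannel switched off (you then have to bring your own CNI).
SERVER_ARGS="--flannel-backend=none"
for component in $DISABLE_COMPONENTS; do
  SERVER_ARGS="$SERVER_ARGS --disable $component"
done

# The install script picks up extra arguments from INSTALL_K3S_EXEC.
# Printed only; pipe the output to sh once you have reviewed it.
INSTALL_CMD="curl -sfL https://get.k3s.io | INSTALL_K3S_EXEC=\"$SERVER_ARGS\" sh -"
echo "$INSTALL_CMD"
```

If all you want is a quick cluster to play with, skip all of this and run the plain install.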

K3s uses containerd as its default container runtime. You can use docker for this, but sticking to containerd will allow for smaller disk requirements, and it works just as well as with docker (both use the same image types, so you can still pull from docker hub or quay and similar).

Requirements

K3s has quite low requirements. You should have at least 512 MB of RAM (while k8s asks for ~2 GB per node to run decently), and at least 1 CPU (haven’t tried without a CPU, but let me know if you manage!).
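If you want to check a machine before installing, something like the sketch below could do it on Linux. The function name meets_minimum is my own invention, and the thresholds are simply the numbers mentioned above.

```shell
#!/bin/sh
# Minimums mentioned above: 512 MB of RAM and 1 CPU.
MIN_MEM_MB=512
MIN_CPUS=1

# meets_minimum MEM_MB CPUS -> exit status 0 if both minimums are met.
meets_minimum() {
  [ "$1" -ge "$MIN_MEM_MB" ] && [ "$2" -ge "$MIN_CPUS" ]
}

# On Linux, read total memory (kB) from /proc/meminfo and the CPU count from nproc.
if [ -r /proc/meminfo ] && command -v nproc >/dev/null 2>&1; then
  mem_mb=$(( $(awk '/^MemTotal:/ {print $2}' /proc/meminfo) / 1024 ))
  cpus=$(nproc)
  if meets_minimum "$mem_mb" "$cpus"; then
    echo "ok: ${mem_mb} MB RAM, ${cpus} CPU(s)"
  else
    echo "too small: ${mem_mb} MB RAM, ${cpus} CPU(s)"
  fi
fi
```

Keep in mind that a "512 MB" VPS usually reports a bit less than that in /proc/meminfo, so treat a "too small" result on such a machine as a hint rather than a verdict.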

It needs to have TCP/6443 (API), TCP/10250 (kubelet) and UDP/8472 (if you use flannel) open on the server (which is what Rancher decided to call the master node), while the agents (nodes) only require the networking port to be open (UDP/8472) (if you want metrics collected from the kubelet, they also need TCP/10250 open).

For security reasons, make sure that the 8472 ports are only exposed to the other nodes, in a private network or with firewall rules. Exposing them to the public net is not a good idea as it allows your cluster to be accessed by nasty hackers!
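As an illustration of such firewall rules, the sketch below prints a set of ufw commands that only open the node-to-node ports to a private network. Everything specific here is an assumption on my part: that you use ufw at all, that the nodes share the 10.0.0.0/24 network, and that flannel runs its default VXLAN backend on UDP 8472. The helper print_node_rules is hypothetical and only prints, it does not change your firewall.

```shell
#!/bin/sh
# Hypothetical private network that the cluster nodes share.
NODE_CIDR="10.0.0.0/24"

# print_node_rules CIDR -> ufw commands that open the flannel VXLAN and
# kubelet ports to that network only.
print_node_rules() {
  echo "ufw allow from $1 to any port 8472 proto udp"
  echo "ufw allow from $1 to any port 10250 proto tcp"
}

# The API port on the server has to be reachable by whoever runs kubectl.
echo "ufw allow 6443/tcp"
print_node_rules "$NODE_CIDR"
```

Read through the printed rules and run them by hand (as root) once they match your own network layout.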

If you wish to go HA, you should give both the servers and the nodes more power. Check out the docs if you want a better view of the specifications that are recommended for HA clusters.

This post will not go through HA though, but rather just the basics.

Installing

Super simple

Installing k3s is quite easy, and one of the easiest ways is to use the k3s setup script with the following command:

curl -sfL https://get.k3s.io | sh -

If you - like me - don’t really like to run scripts without reading through them first, you should probably do that before running the script.

curl -sL https://get.k3s.io -o k3s.sh

cat k3s.sh

...

sh ./k3s.sh

Any configuration you wish to do while using this script is done with environment variables. Setting up the “server” works fine with the above script as it is, while setting up a node requires the K3S_URL=https://myserver:6443 and K3S_TOKEN=mynodetoken environment variables to be set (the token can be found in /var/lib/rancher/k3s/server/node-token on the server).
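Put together, joining a node could look roughly like the sketch below. The server name and token are the same placeholder values as above, and the command is only printed so you can review it before piping it to sh on the node.

```shell
#!/bin/sh
# Placeholder values; the real token lives on the server in
# /var/lib/rancher/k3s/server/node-token
K3S_URL="https://myserver:6443"
K3S_TOKEN="mynodetoken"

# With K3S_URL set, the install script sets the machine up as an agent.
JOIN_CMD="curl -sfL https://get.k3s.io | K3S_URL=${K3S_URL} K3S_TOKEN=${K3S_TOKEN} sh -"
echo "$JOIN_CMD"
```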

When you are done running the script, you actually have a k3s server up and running!

Also super simple

Alex Ellis has created another super simple way of setting up k3s called k3sup (ketchup!). K3sup has a lot of additional functionality (for example app installations via arkade) which you might enjoy!

Setting up k3s via k3sup is even easier, as you don’t even have to ssh into a machine to run it, even that is done for you! After installing k3sup, you can install k3s by using the following small piece of code from your computer:

# Server

k3sup install --ip <server ip> --user <server user>

# Agent

k3sup join --ip <agent ip> --server-ip <server ip> --user <server user>

It doesn’t get much easier than this to be honest.
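Once the install has finished, k3sup saves a kubeconfig file on your own machine (in the current directory by default, as far as I know). A cautious sketch for checking that the nodes joined could look like this. It only calls kubectl if both the file and the tool are actually there.

```shell
#!/bin/sh
# Assumes k3sup wrote its kubeconfig to the directory you ran it from.
KUBECONFIG_FILE="$(pwd)/kubeconfig"

if [ -f "$KUBECONFIG_FILE" ] && command -v kubectl >/dev/null 2>&1; then
  # List the nodes of the new cluster, with IPs and versions.
  KUBECONFIG="$KUBECONFIG_FILE" kubectl get nodes -o wide
else
  echo "no kubeconfig at $KUBECONFIG_FILE yet (or kubectl is not installed)"
fi
```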

Super hard installation process!

If you really don’t want to use a shell script to set k3s up, there is a binary you can use instead.

Now, the binary is of course installed by both get.k3s.io and by k3sup, but with those it’s done without manual labour (something that us developers just love!).

The binary can be downloaded from GitHub. Place it in your preferred bin dir and check out the commands:

# Do k3s server commands:

k3s server

# Do k3s agent commands

k3s agent

# Do kubectl stuff!

k3s kubectl

Any arguments you might need are easily accessible via k3s <command> --help.
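For the manual route, the node settings are passed as arguments instead of environment variables. The sketch below composes the agent command using the --server and --token arguments. The URL and token are placeholders again, and the command is printed rather than executed.

```shell
#!/bin/sh
# Placeholder values; use your own server address and node token.
SERVER_URL="https://myserver:6443"
NODE_TOKEN="mynodetoken"

# On the server machine you would simply run: k3s server
# On each node, the agent needs to know where the server is and how to authenticate.
AGENT_CMD="k3s agent --server ${SERVER_URL} --token ${NODE_TOKEN}"
echo "$AGENT_CMD"
```

Both commands run in the foreground, so for anything longer-lived you will want a service unit around them, which is one of the things the install script otherwise does for you.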

Civo, managed k3s

Now that we have gone through the extremely hard setup process, we might as well take a look at the process of provisioning a new cluster at Civo!

I will not go through the registration process at Civo. If you can’t figure it out, I think kubernetes might be a bit too far for you at the moment, so I’ll start from the part where it actually gets interesting: provisioning the servers!

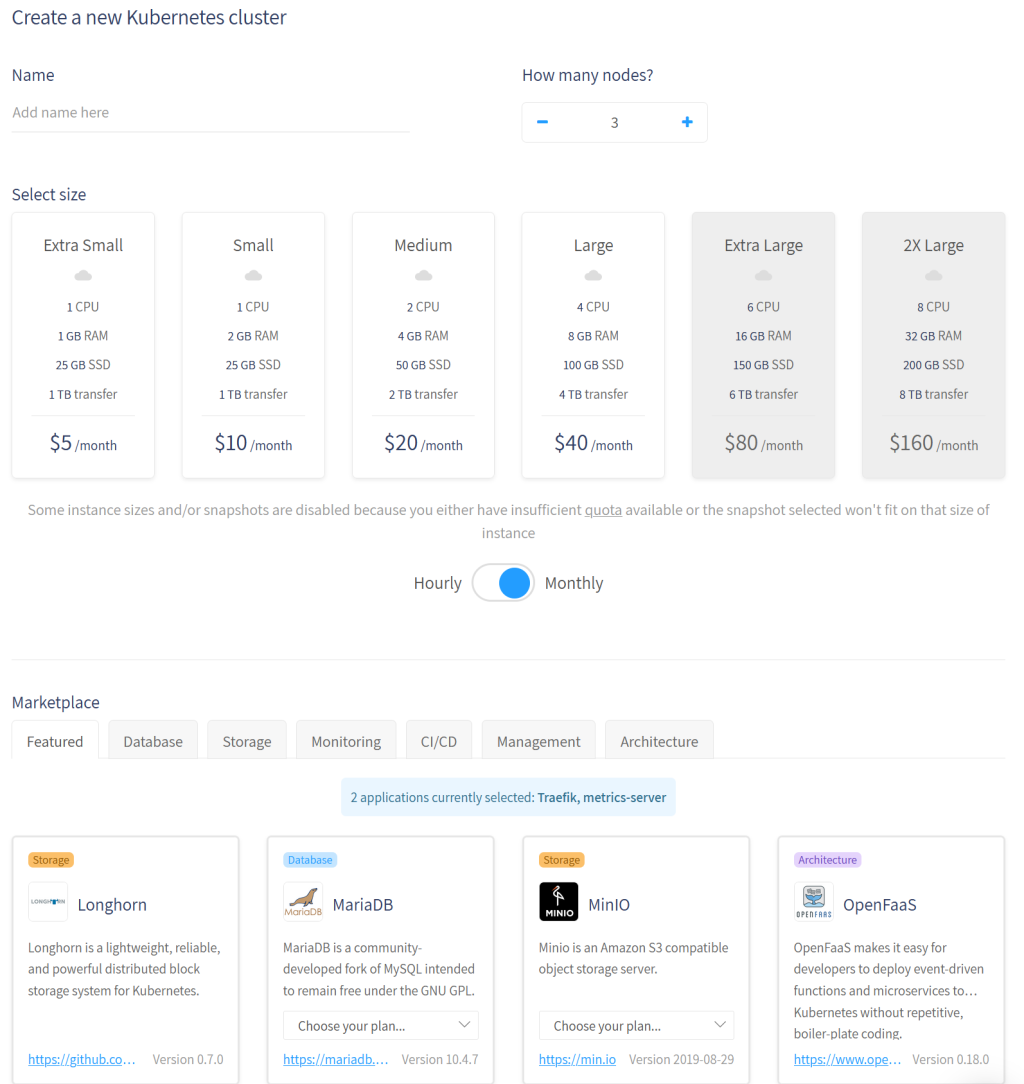

The first thing you will notice is that you have a decent selection of smaller servers to use. The larger node sizes are not available (due to my quota limits!) and when you are setting up your cluster, you can only use one size for all nodes. This is a bit annoying, but from what I have gathered, they are working on making “mix and match” possible in the near future.

Under the cost estimation (all resources are billed by the hour) you can see a list of different applications you can have pre-installed. If you, for example, need a storage plugin installed, Longhorn is available as a pre-installed package.

The “application marketplace” is open source, so you are able to request or even implement (and pull request) a new application that you feel is missing from the currently available applications.

At the bottom of the page, there is a Create button which allows you to finalize and start your own managed k3s cluster.

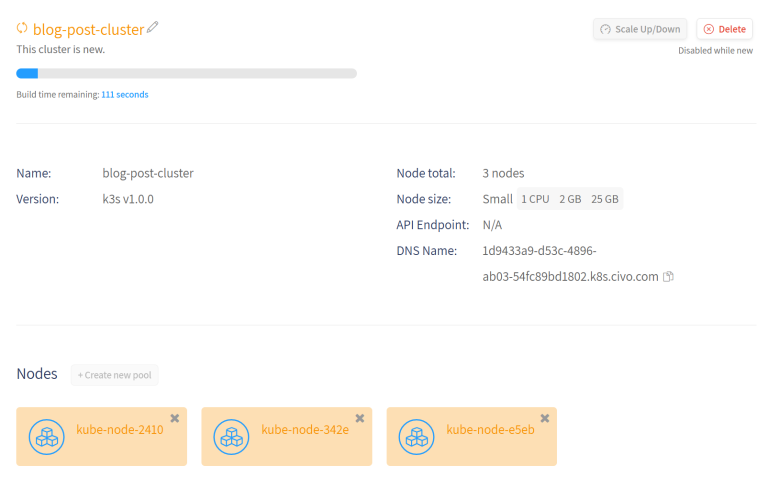

After waiting a couple of minutes while Civo creates the servers and finishes up your cluster, you can download your kubeconfig file and start using the cluster right away.

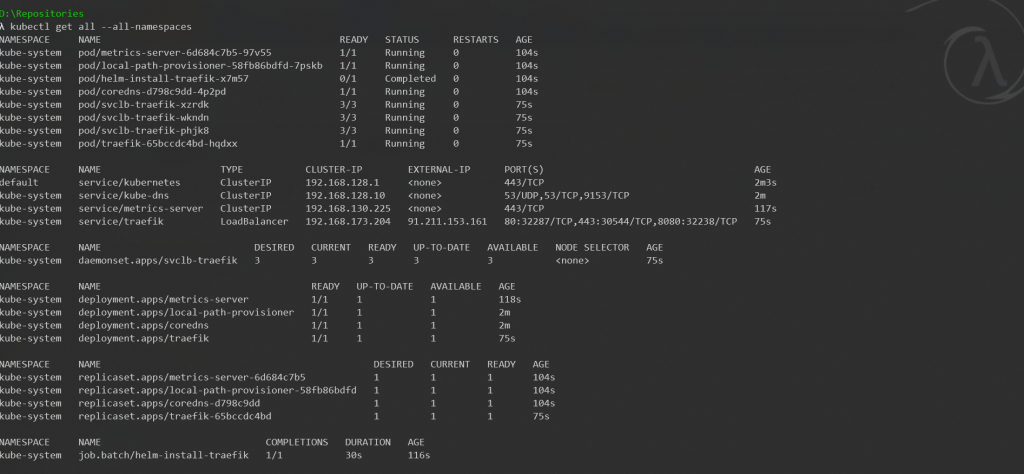

As you see, I decided to install metrics server and traefik by default. This sets up a loadbalancer on your master server (via helm) using a traefik service (no charge! k3s uses the server as proxy and agents as workers).

You can use Civo’s DNS configurations with your own domain if you wish, or even just point your domain to the IP as an A record or the DNS name as a CNAME. A basic firewall ruleset is also created for the cluster, but you have access to change the rules to your liking.

Installing k3s is simple, but setting it up in the cloud via Civo is (if possible) even simpler!

Final words

There is honestly not much more to say about this topic. My intent was for this post to be a long one… but due to the ease of setup with both k3s and Civo, anything more than above would just be me ranting.

I really enjoy using Civo, it’s easy to use, and the community is great. So sign up and take it for a spin! It’s still in beta, so you might encounter issues, but their slack channels are open for people in the beta, making support easy to access and more fun than sending an email and waiting for a day as with some larger providers.

If you wish to take Civo out for a spin, sign up at Civo #Kube100! You even get $70 worth of credits per month to play with!

I am not affiliated with Civo nor is this a paid promotion (well, more than the free credits from the #kube100 beta!).