I have been trying to write a post about Percona - and especially the operators - for a while.

It’s a tool which I first encountered a while back, while researching an alternative to KubeDB (another good project) after their licensing changes.

I never got too deep into it back then, since I decided to go with managed databases at that point, but after visiting Civo Navigate back in September and a follow-up chat with Percona, I decided to dive a bit deeper into it.

I really like the ease of setting it up, and the fact that they support a wide array of database engines makes their

operators very useful.

In this post, we will focus on XtraDB, which is their MySQL version with backup and clustering capabilities.

We will go through the installation of the operator as well as what I find most important in the custom resource which will allow us to provision a full XtraDB cluster with backups and proxying.

This is what I’ve been using the most, and I’ll try to create a post at a later date with some benchmarks to show

how it compares with other databases.

Running databases in Kubernetes (or Docker) used to be a big no-no. This is not as much of an issue nowadays, especially when using good storage types.

In this writeup, I’ll use my default storage class, which on my k3s cluster is a mounted volume on Hetzner. They are decent in speed, but seeing as it’s just a demo, the speed doesn’t matter much!

Prerequisites

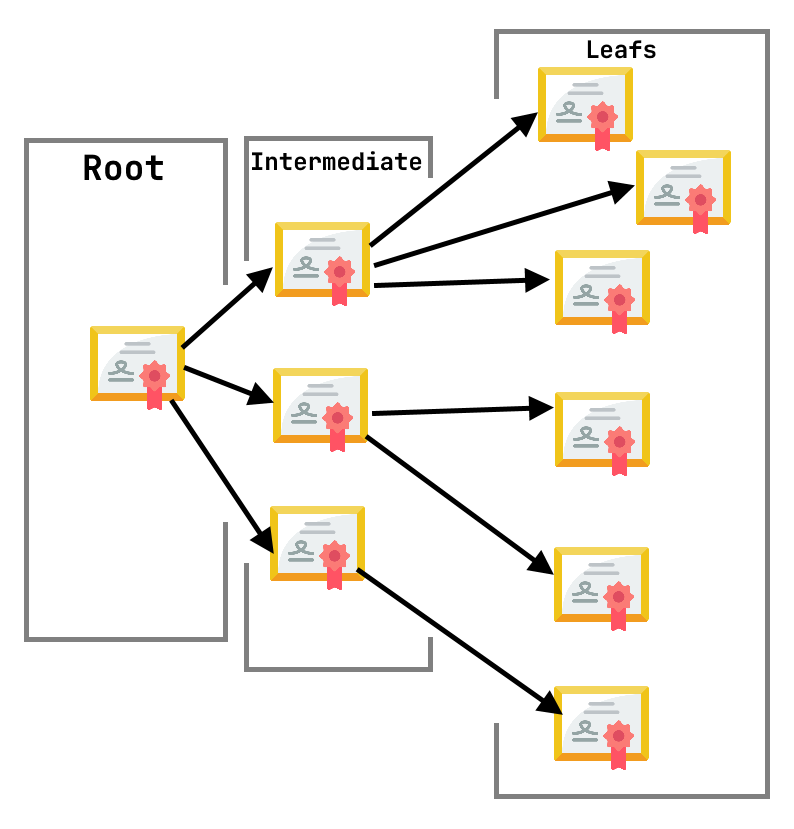

Percona XtraDB makes use of cert-manager to generate TLS certificates. It will automatically create an issuer (namespaced) for your resources, but you do need to have cert-manager installed.

This post will not cover the installation, and I would recommend that you take a look at the official cert-manager documentation for installation instructions.
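Before installing anything else, it can be worth confirming that cert-manager is actually present. The snippet below is a minimal sketch, assuming kubectl is configured against your cluster. The function name is made up for this post, and checking for the Certificate CRD is just one reasonable heuristic, not an official check:

```shell
# Succeeds when the cert-manager Certificate CRD is registered in the cluster.
# (cert_manager_installed is a hypothetical helper name.)
cert_manager_installed() {
  kubectl get crd certificates.cert-manager.io >/dev/null 2>&1
}
```

You could then run `cert_manager_installed && echo "cert-manager found"` before moving on to the operator installation.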

Helm installation

The first thing we have to do is to install the helm chart and deploy it to a Kubernetes cluster.

In this post we will, as I said earlier, use the MySQL version of Percona, and we will use the operator that is supplied by Percona.

If you want to dive deeper, you can find the documentation here!

Percona supplies their own helm charts for the operator via GitHub, so adding it to helm is easily done with

helm repo add percona https://percona.github.io/percona-helm-charts/

helm repo update

If you haven’t worked with helm before, the above snippet will add the Percona repository to your local helm configuration and allow you to install charts from it.

If you just want to install the operator right away, you can do this by invoking the helm install command, but we

might want to look a bit at the values we can pass to the operator first, to customize it slightly.

The full chart can be found on GitHub, where you should be able to see all the customizable values in the values.yaml file (the ones set are the default values).

In the case of this operator, the default values are quite sane: it will create a service account and set up the RBAC rules required for it to monitor the CRD:s.

But, one thing that you might want to change is the value for watchAllNamespaces.

The default value here is false, which will only allow you to create new clusters in the same namespace

as the operator. This might be a good idea if you have multiple tenants in the cluster, and you don’t want

all of them to have access to the operator, while for me, making it a cluster-wide operator is far more useful.

To tell the helm chart that we want it to change the said value, we can either pass it directly in the install

command, or we can set up a values file for our specific installation.

When you change a lot of values, or you want to source-control your overrides, a file is for sure more useful.

To create an override file, you need to create a values.yml (you can actually name it whatever you want) where you set the values you want to change. The format is the same as in the chart’s default values file, so if we only want to change the namespaces parameter, the file only needs a single line: watchAllNamespaces: true

But any value in the default values file can be changed.
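One way to create such an override file straight from the shell is a heredoc. This is only a sketch: it assumes you want the file in the current directory and that watchAllNamespaces is the only value you override.

```shell
# Write a minimal override file that only flips watchAllNamespaces.
cat > values.yml <<'EOF'
watchAllNamespaces: true
EOF
```

Keeping this file in source control alongside your manifests makes it easy to see later exactly which defaults were changed.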

Installing the operator with the said values file is done by invoking the following command:

helm install percona-xtradb-operator percona/pxc-operator -f values.yml --namespace xtradb --create-namespace

The above command will install the chart as percona-xtradb-operator in the xtradb namespace.

You can change namespace as you wish, and it will create the namespace for you.

If you don’t want the namespace to be created (using another one or default) skip the --create-namespace flag.

Without using the namespace flag, the operator will be installed in the default namespace.

The file we created is passed via the -f flag, and will override any values already defined in the default values file.

When we set the watchAllNamespaces value, the helm installation will create cluster-wide roles and bindings. This does not happen if it’s not set, but it is required for the operator to be able to look for and manage clusters in all namespaces.

If you don’t want to use a custom values file, passing values to helm is done easily by the following flags:

helm install percona-xtradb-operator percona/pxc-operator --namespace xtradb --set watchAllNamespaces=true

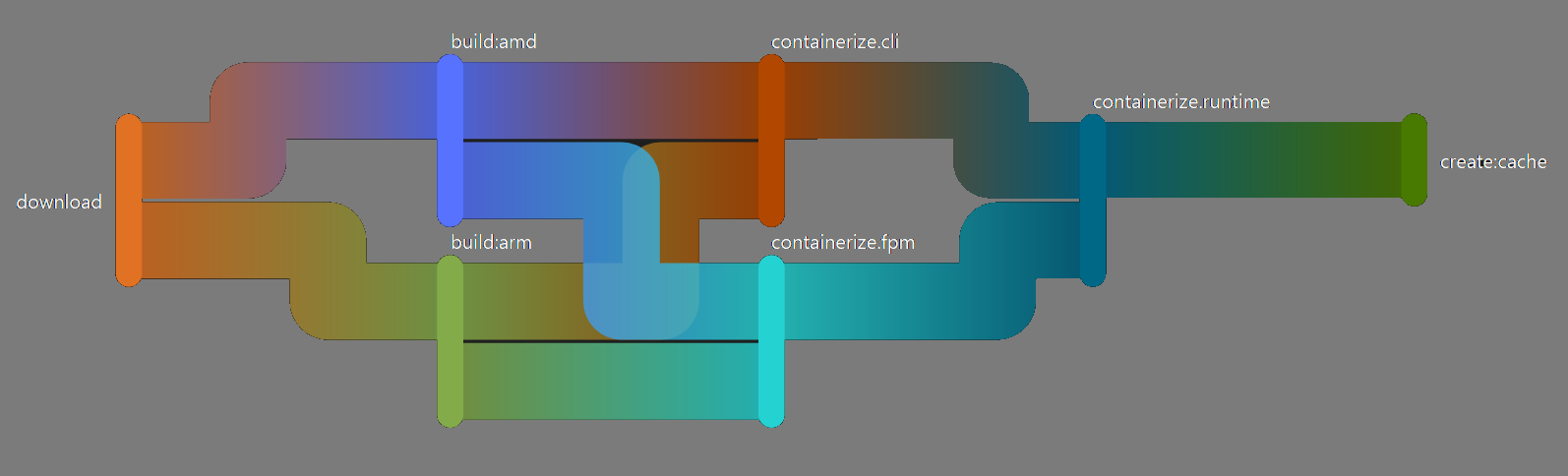

Multi Arch clusters

Currently, the operator images (and others as well) are only available for the AMD64 architecture, so in cases where you use nodes which are based on another architecture (like me, who uses a lot of ARM64), you might want to set the nodeSelector value in your override to only use amd64 nodes:

nodeSelector:
  kubernetes.io/arch: amd64

To update your installation, instead of using install (which will make helm yell about already having it installed)

you use the upgrade command:

helm upgrade percona-xtradb-operator percona/pxc-operator -f values.yml --namespace xtradb

If you are lazy like me, you can actually use the above command with the --install flag to install as well.

Percona xtradb CRD:s

As with most operators, the xtradb operator comes with a few custom resource definitions to allow easy

creation of new clusters.

We can install a new db cluster with helm as well, but I prefer to version control my resources

and I really enjoy using the CRD:s supplied by operators I use, so we will go with that!

So, to install a new percona xtradb cluster, we will create a new kubernetes resource as a yaml manifest.

The cluster uses the api version pxc.percona.com/v1 and the kind we are after is PerconaXtraDBCluster.

There is a lot of configuration that can be done, and a lot you really should look deeper into if you are intending to run the cluster in production (especially the TLS options and how to encrypt the data at rest).

But to keep this post under a million words, I’ll focus on the things we need to just get a cluster up and running!

As with all kubernetes resources, we will need a bit of metadata to allow kubernetes to know where and what to create:

apiVersion: pxc.percona.com/v1
kind: PerconaXtraDBCluster
metadata:
  name: cluster1-test
  namespace: private

In the above manifest, I’m telling kubernetes that we want a PerconaXtraDBCluster set up in the private namespace

using the name cluster1-test.

There are a few extra finalizers we can add to the metadata to hint to the operator how we want it to handle removal of

clusters, the ones that are available are the following:

- delete-pxc-pods-in-order

- delete-pxc-pvc

- delete-proxysql-pvc

- delete-ssl

These might be important to set up correctly, as they will allow for the operator to remove PVC:s and other

configurations which we want it to remove on cluster deletion.

If you do want to save the claims and such, you should not include the finalizers in the metadata.
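To make the placement concrete, here is a sketch of a metadata block with two of the finalizers enabled. The file name is a placeholder of mine, and the fragment is meant to be merged into your cluster manifest rather than applied on its own:

```shell
# Write an example metadata fragment (hypothetical file name) with finalizers set.
cat > metadata-fragment.yml <<'EOF'
metadata:
  name: cluster1-test
  namespace: private
  finalizers:
    - delete-pxc-pvc   # remove the database PVC:s when the cluster is deleted
    - delete-ssl       # remove the generated TLS objects as well
EOF
```

Which finalizers you pick here is purely an example. For anything holding data you care about, leaving out delete-pxc-pvc is the safer choice.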

Spec

After the metadata has been set, we want to start working on the specification of the resource.

There is a lot of customization that can be done in the manifest, but the most important sections are the following:

- tls (which allows us to use cert-manager to configure mTLS for the cluster)
- upgradeOptions (which allows us to set up upgrades of the running mysql servers)
- pxc (the configuration for the actual percona xtradb cluster)
- haproxy (configuration for the HAProxy which runs in front of the cluster)
- proxysql (configuration for the ProxySQL instances in front of the cluster)
- logcollector (well, for logging of course!)
- pmm (Percona Monitoring and Management, which allows us to monitor the instances)
- backup (this one you can probably guess the usage for!)

TLS

In this writeup I will leave this with the default values (and not even add it to the manifest). That way the cluster will create its own issuer and just issue TLS certificates as it needs to. If you want the certificate to be a bit more constrained, this is where you can set both which issuer to use (or create) as well as the SANs to use.
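For reference, a tls section could look roughly like the fragment below. Treat it as a sketch: the key names are as I recall them from the operator’s example custom resource, so check them against the CRD for your operator version. The hostname, issuer name and file name are placeholders.

```shell
# Write an example tls fragment (hypothetical file name) to merge into the manifest.
cat > tls-fragment.yml <<'EOF'
spec:
  tls:
    SANs:
      - db.example.com            # placeholder hostname
    issuerConf:
      name: my-cluster-issuer     # placeholder issuer name
      kind: ClusterIssuer
      group: cert-manager.io
EOF
```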

UpgradeOptions

Keeping your database instances up to date automatically is quite a sweet feature. Now, we don’t always want to do this, seeing as we sometimes want to use the exact same version in the cluster as in another database (if we have multiple environments, for example), or want to stay on a version we know is stable.

But if we want to live on the edge and use the latest version, or stay inside a patch version of the version we currently use, this section is very good.

There are three values that can be set in the upgradeOptions section, and they handle the scheduling, where to look and the version constraints we want to use.

upgradeOptions:
  versionServiceEndpoint: 'https://check.percona.com'
  apply: '8.0-latest'
  schedule: '0 4 * * *'

The versionServiceEndpoint flag should probably always be https://check.percona.com, but if there are others

you can probably switch. I’m not sure about this though, so to be safe, I keep it at the default one!

apply can be used to set a constraint or disable the upgrade option altogether.

If you don’t want your installations to upgrade, just set it to disabled, then it will not run at all.

In the above example, I’ve set the version constraint to use the latest version of the 8.0 mysql branch.

This can be set to a wide array of values, for more detailed info, I recommend checking the percona docs.

The schedule is a cron-formatted value, in this case 4 AM every day. To check continuously (every minute), set it to * * * * *!

pxc

The pxc section of the manifest handles the actual cluster setup.

It has quite a few options, and I’ll only cover the ones I deem most important to just run a cluster. As said earlier, if you are intending to run this in production, make sure you check the documentation or read the CRD specification for all available options.

spec:
  pxc:
    nodeSelector:
      kubernetes.io/arch: amd64
    size: 3
    image: percona/percona-xtradb-cluster:8.0.32-24.2
    autoRecovery: true
    expose:
      enabled: false
    resources:
      requests:
        memory: 256M
        cpu: 100m
      limits:
        memory: 512M
        cpu: 200m
    volumeSpec:
      persistentVolumeClaim:
        storageClassName: 'hcloud-volumes'
        accessModes:
          - ReadWriteOnce
        resources:
          requests:
            storage: 5Gi

The size variable tells the operator how many individual MySQL instances we want to run.

3 is a good choice: the cluster needs a majority of its nodes to keep quorum, so three nodes can survive the loss of one.

The image should presumably be one of the Percona images in this case, to allow updates and everything to work as smoothly as possible.

I haven’t peeked enough into the images, but I do expect that there are some custom things in them to make them run fine, which makes me want to stick to the default images rather than swapping to another!

autoRecovery should probably almost always be set to true. This allows the Automatic Crash Recovery functionality to work, which I expect is something most people prefer to have.

I would expect that you know how resources work in Kubernetes, but I included them in the example to make sure that they are seen, as you usually want to be able to set those yourself. The values set above are probably quite a bit low when you want to use the database for more than just testing, so set them accordingly. Just remember that they apply to each container, not the whole cluster!

The volumeSpec is quite important. In the above example, I use my default volume type, which is a RWO type of disk, and the size is set to 5Gi. The size should probably either be larger or possible to expand on short notice.

There are two more keys which can be quite useful if you wish to customize your database a bit more, and especially

if you want to finetune it.

Percona xtradb comes with quite sane defaults, but when working with databases, it’s not unusual that you need

to enter some custom params to the my.cnf file.

Environment variables

The Percona pxc configuration does not currently allow bare environment variables (from what I can see), but this is not a huge issue, seeing as the spec allows for an envVarsSecret to be set.

The secret must of course be in the same namespace as the resources, but any variables in it will be loaded as

environment variables into the pod.

I’m not certain what environment variables are available for the pxc section, but will try to update this part when I have more info on it.
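As a rough sketch of how the pieces fit together, the secret could be written like this. The secret name, file name and variable are all placeholders I made up for illustration, and the variable is not a documented PXC setting:

```shell
# Write an example Secret manifest (hypothetical names throughout).
cat > pxc-env-secret.yml <<'EOF'
apiVersion: v1
kind: Secret
metadata:
  name: pxc-env-vars            # placeholder, referenced from spec.pxc.envVarsSecret
  namespace: private            # must match the namespace of the cluster
type: Opaque
stringData:
  MY_CUSTOM_VAR: "some-value"   # placeholder variable
EOF

# After applying the file with kubectl, reference it in the cluster manifest:
#   spec:
#     pxc:
#       envVarsSecret: pxc-env-vars
```

Using stringData lets you write the values in plain text and leaves the base64 encoding to Kubernetes.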

Configuration

The configuration property expects a string containing a MySQL configuration file, i.e. the values that you usually put in the my.cnf file.

spec:
  pxc:
    configuration: |
      [mysqld]
      innodb_write_io_threads = 8
      innodb_read_io_threads = 8

HAProxy and ProxySQL

Percona allows you to choose between two proxies to use for load balancing, which is quite nice.

The available proxies are HAProxy and ProxySQL, both valid choices which are well tried in the industry for load balancing and proxying.

The one you choose should have the property enabled set to true, and the other one set to false.

The most “default” configuration you can use would look like this:

# With haproxy
spec:
  haproxy:
    nodeSelector:
      kubernetes.io/arch: amd64
    enabled: true
    size: 3
    image: percona/percona-xtradb-cluster-operator:1.13.0-haproxy
    resources:
      requests:
        memory: 256M
        cpu: 100m

# With proxysql
spec:
  proxysql:
    nodeSelector:
      kubernetes.io/arch: amd64
    enabled: true
    size: 3
    image: percona/percona-xtradb-cluster-operator:1.13.0-proxysql
    resources:
      requests:
        memory: 256M
        cpu: 100m
    volumeSpec:
      emptyDir: {}

The size should be at least 2 (it can be set to 1 if you use allowUnsafeConfigurations, but that’s not recommended).

As with the pxc configuration, it is most likely best to use the Percona-provided images (in this case 1.13.0, the same version as the Percona operator).

As always, the resources ought to be fine-tuned to fit your needs. The above is on the lower end, but could work okay for a smaller cluster which does not have huge traffic.

Just as with the pxc section, both of these sections allow you to supply environment variables via envVarsSecret as well as a configuration property. The configuration does of course differ between the two, and I would direct you to the respective proxy documentation for more information about those!

Now, something quite important to note here is that if you supply a configuration file, you need to supply the full file: it doesn’t merge with the default file but replaces it in full.

So if you want to fine-tune the configuration, include the default configuration as well (and change it). This applies to both haproxy and proxysql, and it works the same whether you use a configmap, a secret or the configuration key directly.

The choice of proxy might be important to decide on at creation of the resource, if you use proxysql, you can

(with a restart of the pods) switch to haproxy, while if you choose haproxy, you can’t change the cluster to use

proxysql. So I would highly recommend that you decide which to use before creating the cluster.

There are a lot more variables you can set here, and all of them can be found at the documentation page.

LogCollector

Logs are nice, we love logs! Percona seems to as well, because they supply us with a

section for configuring a fluent bit log collector right in the manifest! No need for any sidecars,

just turn it on and start collecting :)

If you already have some type of logging system which captures all pods logs and such, this might not be useful

and you can set the enabled value to false and ignore this section.

The log collector specification is quite slim, and looks something like this:

spec:
  logcollector:
    enabled: true
    image: percona/percona-xtradb-cluster-operator:1.13.0-logcollector
    resources:
      requests:
        memory: 64M
        cpu: 50m
    configuration: ...

The default values might be enough, but the fluent bit documentation has quite a bit of customization available if you really want to!

PMM (Monitoring)

The xtradb server is able to push metrics and monitoring data to a PMM (Percona Monitoring and Management) service. This is not installed with the cluster and needs to be set up separately, but if you want to make use of it (which I recommend, seeing how important monitoring is!) the documentation can be found here.

I haven’t researched this too much yet, but personally I would have loved to be able

to scrape the instances with prometheus and have my dashboards in my standard Grafana instance, which I will ask

percona about if it’s possible. In either case, I’ll update this part with more information when I figure it out!

Backups

Backups, one of the most important parts of keeping a database up and running without angry customers questioning you

about where their 5 years of data has gone after a database failure… Well, percona helps us with that too, thankfully!

The Percona backup section allows us to define a bunch of different storage engines to use to store our backups. This is great, because we don’t always want to store our backups on the same disks or systems as we run our cluster. The most useful way to store backups is likely an S3-compatible storage, which can be done, but if you really want to you can store them either in a PV or even on the local disk of the node.

We can even define multiple storages to use with different schedules!

spec:
  backup:
    image: perconalab/percona-xtradb-cluster-operator:main-pxc8.0-backup
    storages:
      s3Storage:
        type: 's3'
        nodeSelector:
          kubernetes.io/arch: amd64
        s3:
          bucket: 'my-bucket'
          credentialsSecret: 'my-credentials-secret'
          endpointUrl: 'the-s3-service-i-like-to-use.com'
          region: 'eu-east-1'
      local:
        type: 'filesystem'
        nodeSelector:
          kubernetes.io/arch: amd64
        volume:
          persistentVolumeClaim:
            accessModes:
              - ReadWriteOnce
            resources:
              requests:
                storage: 10G
    schedule:
      - name: 'daily'
        schedule: '0 0 * * *'
        keep: 3
        storageName: s3Storage
      - name: 'hourly'
        schedule: '0 * * * *'
        keep: 2
        storageName: 'local'

In the above yaml, we have set up two different storage types: one s3 type and one filesystem type.

The s3 type is pointed to a bucket in my special s3-compatible storage while the filesystem one makes use of a persistent volume.

In the schedule section, we set it to create a daily backup to the s3 storage (and keep the 3 latest ones) while the local storage one will run every hour and keep the 2 latest.

Each section under storages will spawn a new container, so we can change the resources and such for each of them (and you might want to)

and they will by default re-try creation of the backup 6 times (can be changed by setting the spec.backup.backoffLimit to a higher value).

There are a lot of options for backups, and I would highly recommend taking a look at the docs for them!

Point in time

One thing that could be quite useful when working with backups for databases is point in time recovery.

Percona XtraDB has this available in the backup section under the pitr section:

spec:
  backup:
    pitr:
      storageName: 'local'
      enabled: true
      timeBetweenUploads: 60

It makes use of the same storage as defined in the storages section, and you can set the interval between PIT uploads with timeBetweenUploads (in seconds).

Restoring a backup

Sometimes our databases fail very badly, or we get some bad data injected into them. In cases like those we need to restore an earlier backup of said database.

I won’t cover this in this blogpost, as it’s too much to cover in a h4 in a tutorial like this,

but I’ll make sure to create a new post with disaster scenarios and how percona handles them.

If you really need to recover your data right now (before my next post) I would recommend that you read either the “Backup and restore” or the “How to restore backup to a new kubernetes-based environment” section in the documentation.

The full manifest

Now that we have had a look at the different sections, we can set up our full manifest:

apiVersion: pxc.percona.com/v1
kind: PerconaXtraDBCluster
metadata:
  name: cluster1-test
  namespace: private
spec:
  upgradeOptions:
    versionServiceEndpoint: 'https://check.percona.com'
    apply: '8.0-latest'
    schedule: '0 4 * * *'
  pxc:
    size: 3
    nodeSelector:
      kubernetes.io/arch: amd64
    image: percona/percona-xtradb-cluster:8.0.32-24.2
    autoRecovery: true
    expose:
      enabled: false
    resources:
      requests:
        memory: 256M
        cpu: 100m
      limits:
        memory: 512M
        cpu: 200m
    volumeSpec:
      persistentVolumeClaim:
        storageClassName: 'hcloud-volumes'
        accessModes:
          - ReadWriteOnce
        resources:
          requests:
            storage: 5Gi
  haproxy:
    enabled: true
    nodeSelector:
      kubernetes.io/arch: amd64
    size: 3
    image: percona/percona-xtradb-cluster-operator:1.13.0-haproxy
    resources:
      requests:
        memory: 256M
        cpu: 100m
  proxysql:
    enabled: false
  logcollector:
    enabled: true
    image: percona/percona-xtradb-cluster-operator:1.13.0-logcollector
    resources:
      requests:
        memory: 64M
        cpu: 50m
  backup:
    image: perconalab/percona-xtradb-cluster-operator:main-pxc8.0-backup
    storages:
      s3Storage:
        type: 's3'
        nodeSelector:
          kubernetes.io/arch: amd64
        s3:
          bucket: 'my-bucket'
          credentialsSecret: 'my-credentials-secret'
          endpointUrl: 'the-s3-service-i-like-to-use.com'
          region: 'eu-east-1'
      local:
        type: 'filesystem'
        nodeSelector:
          kubernetes.io/arch: amd64
        volume:
          persistentVolumeClaim:
            accessModes:
              - ReadWriteOnce
            resources:
              requests:
                storage: 10G
    schedule:
      - name: 'daily'
        schedule: '0 0 * * *'
        keep: 3
        storageName: s3Storage
      - name: 'hourly'
        schedule: '0 * * * *'
        keep: 2
        storageName: 'local'
    pitr:
      storageName: 'local'
      enabled: true
      timeBetweenUploads: 60

Now, to get the cluster running, just invoke kubectl and it’s done!

kubectl apply -f my-awesome-cluster.yml

It takes a while for the databases to start up (there are a lot of components to start up!) so you might have to wait a few minutes before you can start playing around with the database.

Check the status of the resources with the get kubectl command:

kubectl get all -n private

NAME                          READY   STATUS    RESTARTS   AGE
pod/cluster1-test-pxc-0       3/3     Running   0          79m
pod/cluster1-test-haproxy-0   2/2     Running   0          79m
pod/cluster1-test-haproxy-1   2/2     Running   0          78m
pod/cluster1-test-haproxy-2   2/2     Running   0          77m
pod/cluster1-test-pxc-1       3/3     Running   0          78m
pod/cluster1-test-pxc-2       3/3     Running   0          76m

NAME                                     TYPE        CLUSTER-IP     EXTERNAL-IP   PORT(S)                                 AGE
service/cluster1-test-pxc                ClusterIP   None           <none>        3306/TCP,33062/TCP,33060/TCP            79m
service/cluster1-test-pxc-unready        ClusterIP   None           <none>        3306/TCP,33062/TCP,33060/TCP            79m
service/cluster1-test-haproxy            ClusterIP   10.43.45.157   <none>        3306/TCP,3309/TCP,33062/TCP,33060/TCP   79m
service/cluster1-test-haproxy-replicas   ClusterIP   10.43.54.62    <none>        3306/TCP                                79m

NAME                                     READY   AGE
statefulset.apps/cluster1-test-haproxy   3/3     79m
statefulset.apps/cluster1-test-pxc       3/3     79m

When all StatefulSets are ready, you are ready to go!

Accessing the database

When a configuration as the one above is applied, a few services will be created.

The service you most likely want to interact with is called <your-cluster-name>-haproxy (or -proxysql depending on proxy)

which will proxy your commands to the different backend mysql servers.

From within the cluster it’s quite easy: just access the service. From outside, you will need a loadbalancer service (which can be defined in the manifest) or alternatively an ingress which can expose the service to the outer net.

If you wish to test your database from within the cluster, you can run the following command:

kubectl run -i --rm --tty percona-client --namespace private --image=percona:8.0 --restart=Never -- bash -il

percona-client:/$ mysql -h cluster1-test-haproxy -uroot -proot_password

The root password can be found in the <your-cluster-name>-secrets secret under the root key.
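To fetch that password without opening the secret by hand, something like the following should work. It is a small sketch that assumes the default secret name pattern described above and a configured kubectl. The function name is my own invention:

```shell
# Print the decoded root password for a cluster.
# $1: cluster name, $2: namespace (pxc_root_password is a hypothetical helper)
pxc_root_password() {
  kubectl get secret "$1-secrets" --namespace "$2" \
    --output jsonpath='{.data.root}' | base64 --decode
}
```

For the cluster in this post, that would be called as `pxc_root_password cluster1-test private`.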

Final words

I really enjoy using percona xtradb, it allows for really fast setup of mysql clusters with backups enabled

and everything one might need.

But, I’m quite new to the tool, and might have missed something vital or important!

So please, let me know in the comments if something really important is missing or wrong.
